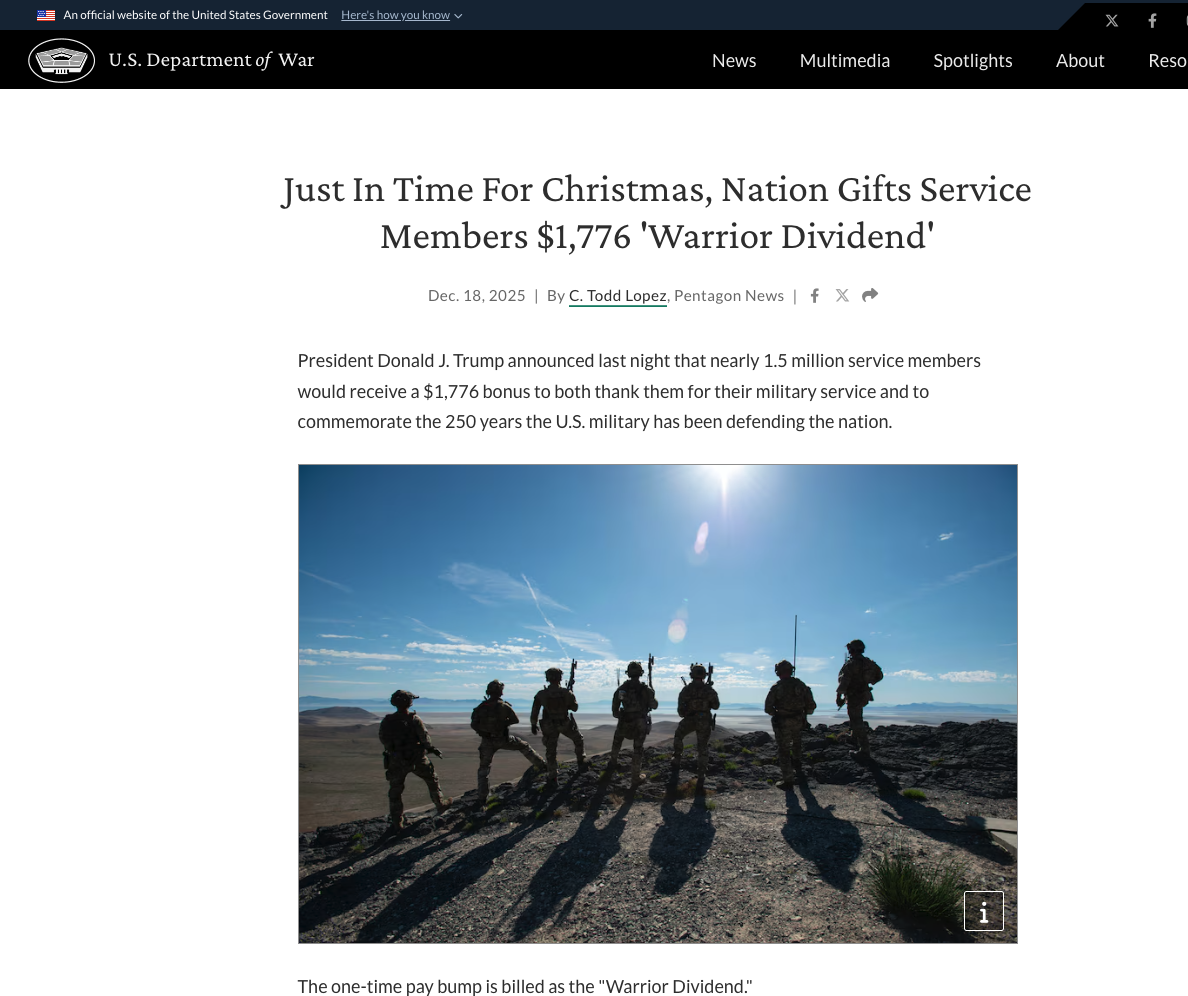

On December 17, 2025, a public announcement from President Trump referencing a $1,776 “Warrior Dividend” for U.S. veterans quickly gained traction across news platforms and social media. Within hours, something far more concerning unfolded beneath the surface of search results.

Bolster AI’s research team observed a sudden surge of low-credibility websites publishing near-identical articles, all claiming to explain eligibility, payment timelines, or “official confirmation” of the benefit. What appeared to be organic public interest was actually a coordinated SEO content-farming operation designed to monetize attention at scale.

From Legitimate News to Search Engine Manipulation

In the immediate aftermath of the announcement, dozens of newly registered or previously dormant domains began publishing articles optimized for search visibility rather than accuracy. These pages were aggressively tuned with keywords like “$1,776 payment,” “warrior dividend confirmed,” and “veterans benefit update.”

The result was predictable: content farms quickly out-ranked authoritative sources, users searching for clarification were funneled into ad-heavy pages, and legitimate government or major media coverage was pushed down in search results.

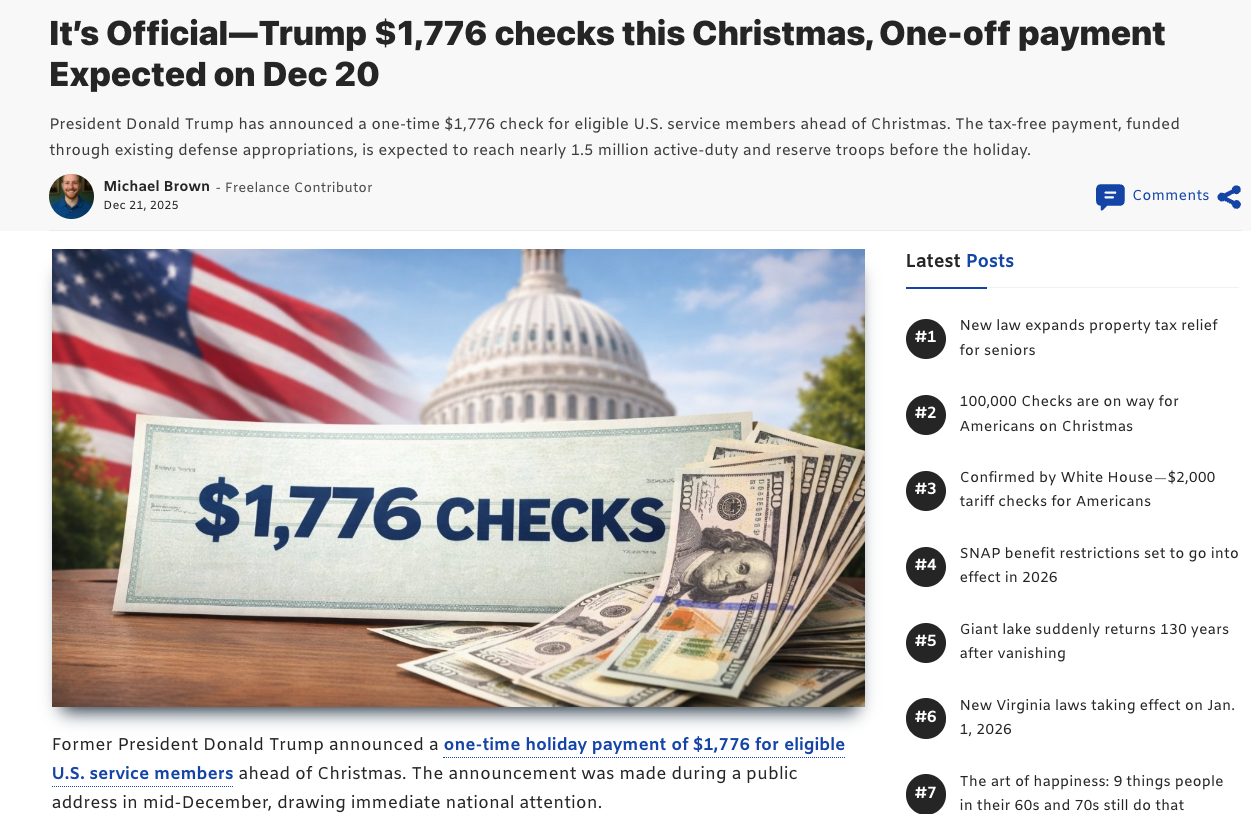

During the third week of December 2025, Bolster AI tracked this pattern across multiple domains that shared identical page layouts, publishing cadence, and internal linking behavior. These are clear indicators of automation rather than journalism. Many of these pages relied on catchy, emotionally charged keywords embedded directly in the headline, designed to maximize click-through rates rather than provide accurate or verified information.
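One way to surface near-identical articles across domains is to compare overlapping word sequences ("shingles") and score the overlap with Jaccard similarity. The sketch below is illustrative only and is not Bolster AI's detection method. The function names, the shingle size of three words, and the sample sentences are all assumptions.

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word shingles in text (lowercased, alphanumeric tokens)."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: str, b: str, k: int = 3) -> float:
    """Jaccard similarity of the two texts' shingle sets, in the range 0.0 to 1.0."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


# Hypothetical article snippets, invented for illustration.
page_a = ("veterans will receive the warrior dividend payment "
          "before christmas according to official sources")
page_b = ("veterans will receive the warrior dividend payment "
          "before new year according to official sources")
page_c = "the city council approved a new budget for road repairs on tuesday"

similar = jaccard(page_a, page_b)    # high overlap: likely templated content
unrelated = jaccard(page_a, page_c)  # no shared three-word sequences
```

Pairs that score above a chosen threshold can be queued for manual review. The threshold itself would need tuning against real pages.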

AI-Generated Articles and Synthetic Credibility

A closer review of these pages revealed extensive use of AI-generated text, often padded with repetitive phrasing and vague references to “official sources” without citations. Many articles appeared coherent on the surface but offered no verifiable details: no official agency links, no policy documentation, nothing to substantiate the claims.

Equally deceptive were the visual elements:

- AI-generated featured images showing military symbolism or patriotic themes

- Stock-like author photos tied to fake journalist profiles

- Generic bios reused across multiple unrelated websites

These content-farming infrastructures routinely rely on dummy author identities, using fabricated names and AI-generated profile images to create a false sense of legitimacy. The absence of any verifiable background information around these authors represents a significant credibility red flag.

Fake Authors Exposed Through Reverse Image Search

To validate author authenticity, Bolster AI collected profile images from these articles and conducted reverse image searches using Google Images, TinEye, Yandex Image Search, and Pinterest Visual Search.

Across all four tools, the results consistently failed to surface any authentic references tied to these individuals: no professional profiles, no social media presence, no published work history, and no legitimate affiliations. This strongly indicates these author profiles were artificially created solely for content-farming purposes.

In one instance, Bolster AI examined an author profile on texasfosteryouthconnections[.]org, listed as “Michael Brown.” A reverse image search of the profile photo surfaced the identical image on another unrelated domain, ffesp[.]org. Both sites shared identical layouts, menu structures, visual themes, and author profile formats, alongside aggressive clickbait advertising placements. This level of uniformity strongly suggests they are part of a centrally operated SEO content-farming infrastructure designed to mass-produce monetized misinformation.
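The cross-domain image check described above can be partly automated once profile images have been collected. The sketch below groups domains by the SHA-256 digest of the image bytes. This is an assumption-laden illustration, not the workflow Bolster AI used. The function name and the placeholder byte strings are hypothetical, and `unrelated-site.example` is an invented domain.

```python
import hashlib
from collections import defaultdict


def group_domains_by_image(records):
    """Map each image digest to the domains using it, keeping only images
    that appear on two or more domains.

    records: iterable of (domain, image_bytes) pairs.
    """
    seen = defaultdict(set)
    for domain, image_bytes in records:
        digest = hashlib.sha256(image_bytes).hexdigest()
        seen[digest].add(domain)
    return {d: sorted(doms) for d, doms in seen.items() if len(doms) > 1}


# Placeholder bytes stand in for downloaded author photos (hypothetical data).
records = [
    ("texasfosteryouthconnections[.]org", b"author-photo-bytes-1"),
    ("ffesp[.]org", b"author-photo-bytes-1"),
    ("unrelated-site.example", b"author-photo-bytes-2"),
]

shared = group_domains_by_image(records)
```

An exact hash only matches byte-identical files. Images that have been resized or re-encoded would need a perceptual comparison instead, which is outside this sketch.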

Seasonal Exploitation: Christmas-Themed Misleading Content

Bolster AI also identified another highly optimized article that blended partial truth with misleading claims. This is a common tactic used to manipulate search visibility while spreading misinformation.

This article surfaced prominently in Google search results with the headline “$100,000 checks are on the way for Americans this Christmas.” The wording was intentionally crafted to attract maximum attention, especially from U.S. citizens actively searching for holiday-related financial assistance.

Upon verification, Bolster AI confirmed the claim was misleading. The actual program applied only to a limited group of eligible residents in Pennsylvania, not the broader U.S. population. By omitting this critical context, the content created a false impression of a nationwide payout, effectively deceiving readers at scale.

This pattern highlights a core tactic: leveraging seasonal events and emotionally charged topics to inflate click-through rates. The primary objective is maximizing advertising revenue through exaggerated headlines, not information accuracy.

Official Coverage vs. Content Farming: A Stark Imbalance

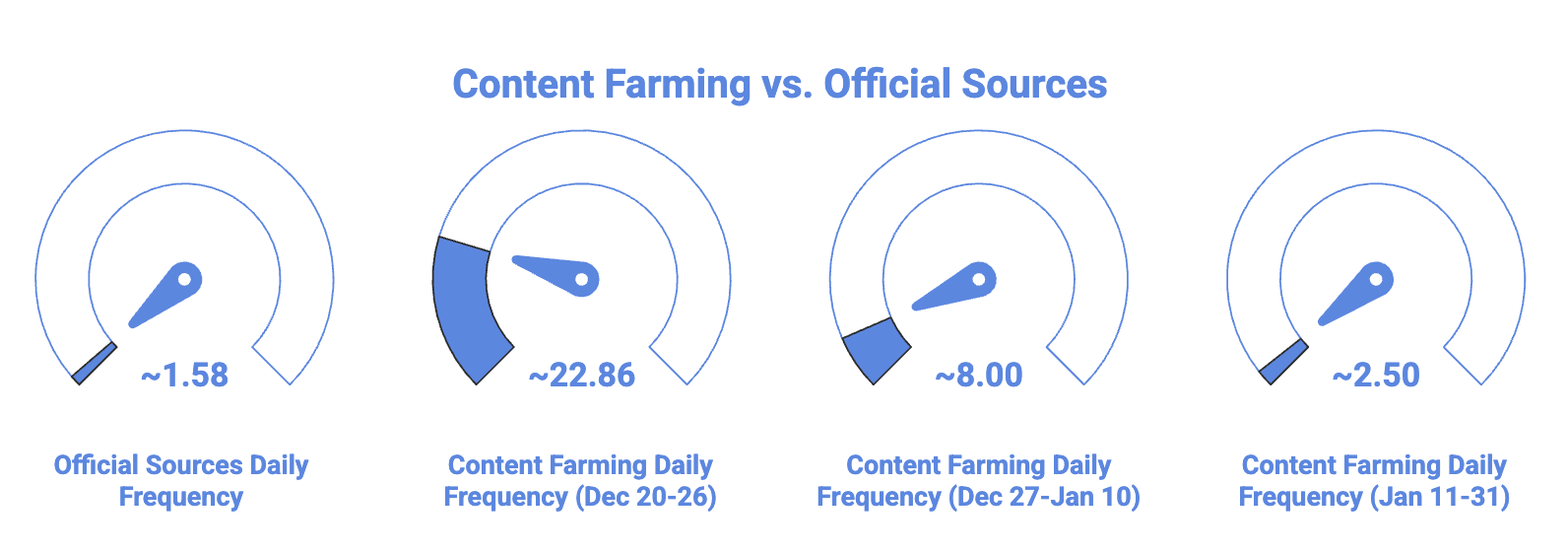

Between December 17, 2025, and January 31, 2026, Bolster AI reviewed reporting from official government sources and established news organizations, including U.S. military websites, federal portals, and major media outlets. Across this entire period, the team identified 73 well-researched, information-rich articles prioritizing accuracy over speed.

Content farming activity told a different story.

In a single seven-day window shortly after the announcement (December 20–26), the research team detected 160 content farming articles targeting the Warrior Dividend narrative. These pages offered minimal factual detail, relied on clickbait headlines, and were aggressively monetized with ads. After the initial surge, publication stabilized at approximately 8 articles per day until January 10, then declined to 2–3 articles per day as operators shifted to newer topics. Many domains were abandoned or taken offline once SEO value dropped.

The Numbers Tell the Story

For this single government program, the research team identified 322 content farming articles compared to just 73 official and reputable articles.

Navigating the news became a game of “spot the bot.” Content farms produced about 4.4 times the volume of reputable reporting (322 articles versus 73). By article count alone, a reader was more than four times as likely to encounter a low-effort content farm page as an official source.
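The reported figures can be sanity-checked with simple arithmetic. The sketch below assumes the 8-per-day plateau ran from December 27 through January 10 and that the tail ran at the low end of the 2–3 range through January 31. Neither date boundary is stated in exactly this form above, so treat this as one plausible reconciliation rather than the team's actual tally.

```python
from datetime import date


def days_inclusive(start: date, end: date) -> int:
    """Number of calendar days from start to end, counting both endpoints."""
    return (end - start).days + 1


surge_articles = 160  # reported for December 20-26

# Assumed plateau: roughly 8 articles per day, December 27 to January 10.
plateau_days = days_inclusive(date(2025, 12, 27), date(2026, 1, 10))

# Assumed tail: 2 articles per day (low end of 2-3), January 11 to January 31.
tail_days = days_inclusive(date(2026, 1, 11), date(2026, 1, 31))

estimated_total = surge_articles + 8 * plateau_days + 2 * tail_days

# Ratio of content-farm articles to reputable articles, from the reported totals.
ratio = 322 / 73

print(f"estimated total: {estimated_total}, ratio: {ratio:.2f}")
```

Under these assumptions the estimate matches the reported total of 322, and the ratio works out to roughly 4.4 to 1.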

Why This Matters

This pattern demonstrates how content farming infrastructures can rapidly exploit public trust, overwhelm search results, and distort access to accurate information during sensitive policy announcements involving financial benefits.

The Warrior Dividend case is a clear example of how content farming doesn’t just chase traffic. It actively competes with official narratives, often winning visibility in the critical early days after an announcement.

Why Content Farming is Not “Harmless SEO Spam”

Content farming is often dismissed as a nuisance rather than a threat. That perception is outdated.

While these pages may not immediately steal credentials or deploy malware, they distort public understanding of government programs, spread partial or misleading information, and condition users to trust low-quality sources.

In the case of the Warrior Dividend, some blogs implied guaranteed nationwide payments, while reputable outlets clarified that discussions centered on potential benefit adjustments, not universal disbursements. The resulting erosion of trust in search results is cumulative and dangerous.

A Preview of Election-Year Abuse in 2026

The timing of this campaign is especially notable. As the 2026 U.S. midterm election cycle approaches, government-related topics like benefits, taxes, veterans’ programs, and healthcare will continue to dominate public search interest.

Content-farming operators are well positioned to exploit election-related announcements, push misleading narratives into search results, and monetize confusion before corrections can surface. What begins as ad-driven SEO abuse can rapidly evolve into large-scale misinformation, particularly when amplified through social platforms.

Organizations looking to protect their brand from exploitation in similar schemes should consider social media threat intelligence as part of their defense strategy.

How to Spot SEO Content-Farming Blogs

For everyday users, identifying these pages is increasingly important. Common red flags include:

- Unknown websites ranking unusually high for breaking news

- Headlines that promise certainty (“Payment Confirmed,” “Money Sent”) without sources

- Excessive ads, pop-ups, or auto-playing videos

- Vague references like “according to reports” with no official citations

Legitimate sources typically link directly to government agencies or primary documents, provide author credentials with verifiable history, and avoid sensational headlines.
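Several of the red flags above lend themselves to a simple rule-based check. The sketch below is a toy heuristic, not a production classifier. The `Page` structure, the phrase lists, the `.gov`/`.mil` test for official links, and the ad threshold of five are all assumptions made for illustration. It does not model search ranking, so the "unknown website ranking unusually high" signal is left out.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse

# Phrases taken from the examples in the list above.
CERTAINTY_PHRASES = ("payment confirmed", "money sent")
VAGUE_ATTRIBUTIONS = ("according to reports",)


@dataclass
class Page:
    """Hypothetical summary of a scraped page."""
    headline: str
    body: str
    outbound_links: list[str] = field(default_factory=list)
    ad_slots: int = 0


def links_to_official_source(links: list[str]) -> bool:
    """True if any link points to a .gov or .mil host (a rough proxy only)."""
    for url in links:
        host = urlparse(url).hostname or ""
        if host.endswith(".gov") or host.endswith(".mil"):
            return True
    return False


def red_flags(page: Page, max_ads: int = 5) -> list[str]:
    """Return the red flags a page trips. max_ads is an arbitrary threshold."""
    flags = []
    official = links_to_official_source(page.outbound_links)
    headline = page.headline.lower()
    body = page.body.lower()
    if any(p in headline for p in CERTAINTY_PHRASES) and not official:
        flags.append("certainty headline without official source")
    if any(p in body for p in VAGUE_ATTRIBUTIONS) and not official:
        flags.append("vague attribution without official citation")
    if page.ad_slots > max_ads:
        flags.append("excessive ads")
    return flags


# Hypothetical pages for illustration.
suspect = Page(
    headline="Warrior Dividend Payment Confirmed",
    body="According to reports, checks are coming soon.",
    ad_slots=9,
)
sourced = Page(
    headline="What is known about the proposed benefit",
    body="The agency published guidance this week.",
    outbound_links=["https://www.va.gov/"],
    ad_slots=1,
)
```

A page that trips several flags is a candidate for closer review rather than proof of content farming, since legitimate sites can also run many ads or use loose attribution.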

Final Takeaway

The $1,776 Warrior Dividend campaign illustrates how search engines have become a battleground, not just for visibility but for trust.

Content-farming operators are no longer chasing clicks alone. They are shaping what people see, believe, and share. As election-year narratives intensify in 2026, the line between monetization abuse and misinformation will continue to blur.

Awareness, attribution, and timely intervention are essential to protecting your organization.

Bolster’s AI-powered platform helps organizations detect and take down fraudulent content, phishing attacks, and brand impersonation at scale. From domain monitoring to dark web threat intelligence, we provide comprehensive protection against external threats. Request a demo to see how we can protect your organization.