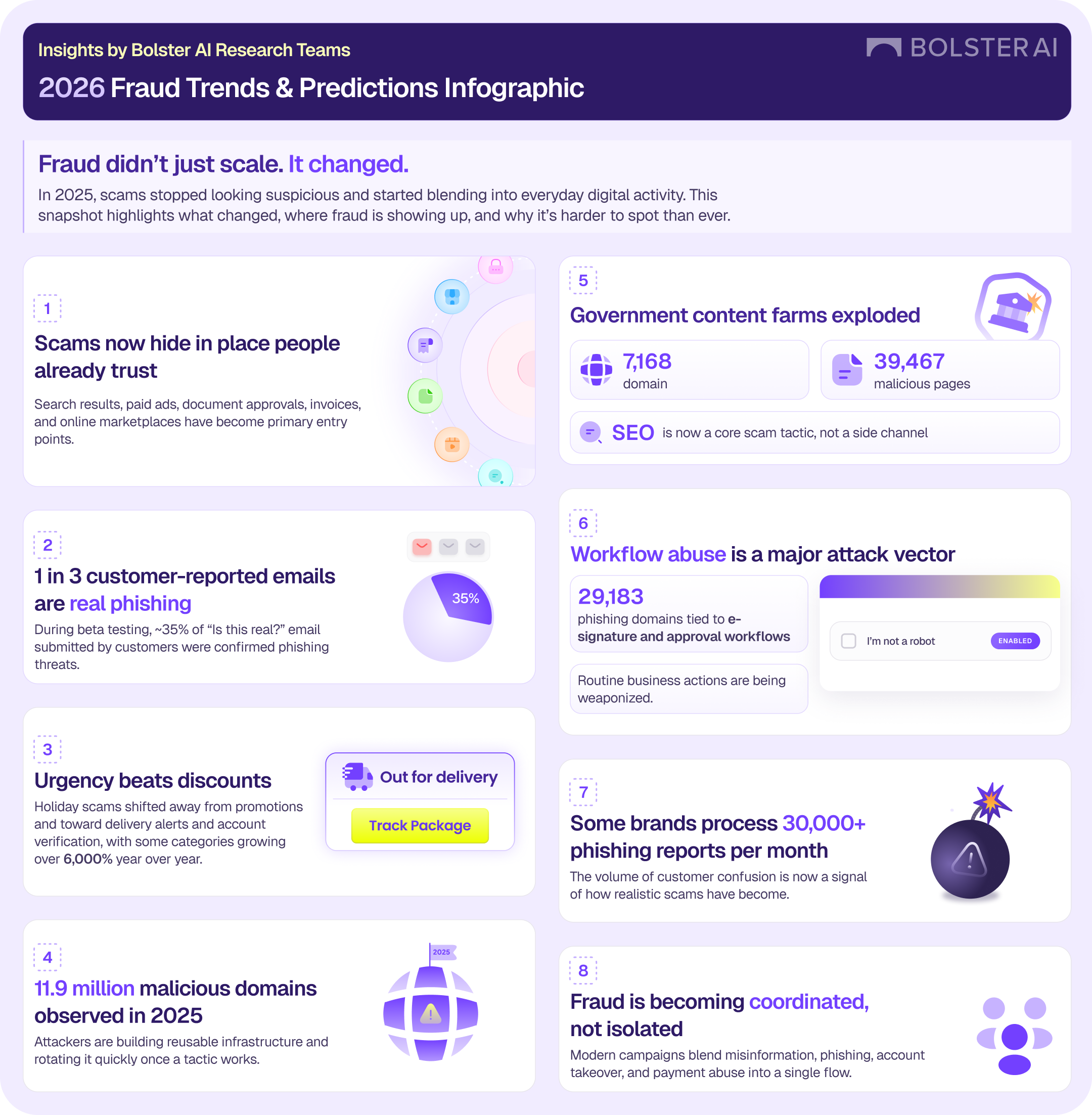

Over the past year, my team and I kept running into the same uncomfortable realization: fraud wasn’t behaving the way most people still talk about it.

We weren’t just seeing more phishing attempts or new variations on familiar scams. We were seeing fraud show up in places where users normally don’t expect to second-guess what they’re looking at. Messages tied to document approvals. Login prompts that looked like everyday work. Pages surfaced through search or ads that felt legitimate enough to make people pause rather than panic.

What stood out wasn’t that people were falling for obvious scams; it was that many of them weren’t sure what they were looking at anymore.

That uncertainty showed up most clearly through customer abuse mailboxes. Large brands were receiving tens of thousands of emails each month from customers asking a simple question: “Is this real?” When Bolster AI analyzed those submissions, about one in three turned out to be confirmed phishing. The rest weren’t harmless either. They lived in a gray area where the message looked believable enough to cause hesitation, even if it wasn’t ultimately malicious.

That shift matters.

It tells us that fraud is no longer just about deception; it’s about placement. Attackers are deliberately putting scams inside systems and workflows people already use and trust, and they’re doing it repeatedly, not as one-off attempts.

As we traced these campaigns back, another pattern became hard to ignore. They weren’t improvised; infrastructure was being set up well in advance. Campaigns were timed around predictable moments like renewals, enrollment periods, payment issues, or seasonal events. When something worked, it was reused and scaled.

What we were looking at felt less like isolated attackers and more like organized operations that understood how to move users through a process.

That’s ultimately why we decided to publish this report.

The goal wasn’t to create dramatic predictions or chase headlines. It was to document what we actually observed across 2025 and explain why those patterns point to a meaningful shift in how fraud operates. When scams blend into normal digital activity, they become harder to detect, easier to repeat, and far more effective at scale.

I’m proud of this work because it reflects what our research team is seeing every day, not what we wish the threat landscape looked like. And I think it’s important that security and fraud teams understand that defending against modern fraud requires looking earlier in the lifecycle, before a message ever reaches an inbox or a user is asked to make a decision.

If you want a deeper look at the data and patterns behind these observations, I encourage you to read the full 2026 Fraud Trends and Predictions report. It lays out what changed in 2025 and why those changes should shape how organizations think about fraud moving forward.
